Bot Hound uses AI to classify every sampled follower in an account’s list. But what does that actually look like? What kinds of accounts does it catch, and just as importantly, what does it leave alone?

Here are some examples from real scans. They show how the AI handles impersonation, lure bots, holistic profile analysis, and the tricky cases where a less careful tool would get it wrong.

Impersonation: The Hard Problem

Impersonation bots are among the most common fakes on X, and among the hardest for rule-based tools to catch. The account might have a profile photo, a bio, and a plausible name. It might copy someone’s avatar without ever mentioning the impersonated person (or organization) by name, or slightly misspell the name to throw off rule-based detection. You have to recognize the person being impersonated and then make a judgment call that the account really is impersonating them, and that’s where things get interesting.

Famous People

This account uses the name and photo of comedian Matt Rife. No bio, no posts, brand new account. A rule-based tool would see “86 following, 1 follower” and might flag the ratio, or might not. It wouldn’t know who Matt Rife is.

Bot Hound flagged this as impersonation of a known public figure. Zero posts, stolen photo, random digits in username.

Bot Hound’s AI recognizes the name and face, connects them to a real public figure, and flags it as impersonation. This works across thousands of celebrities, politicians, athletes, and public figures without anyone maintaining a manual list. The model just knows who these people are.

Relatives, Associates, Organizations, etc.

Fake accounts often don’t impersonate famous people directly. Instead, they trade on proximity to them. This account claims to be “Son of Elon Musk” and uses a photo of Saxon Musk. It’s not impersonating Elon himself. It’s impersonating a family member most people wouldn’t recognize.

Claims to be “Son of Elon Musk,” posts nothing but Elon retweets. The AI connected the claim, the photo, and the behavior pattern.

A rule-based tool won’t catch this. Bot Hound’s AI makes the connection between the bio claim, the profile photo, and the retweet pattern, and flags it.

Unicode Tricks to Dodge Detection

This account impersonates Cointelegraph, one of the largest crypto media outlets. The display name reads “Ć__ointelegraph♤£_” with the C replaced by a diacritical variant and symbols inserted to break string matching. It uses Cointelegraph’s logo, copies its bio (“Trusted crypto media since 2013”), and links to a suspicious Telegram group.

The name reads as “Cointelegraph” to a human, but uses unicode substitution and symbols to evade keyword filters. 89 followers vs the real account’s 2M+. The AI saw through it.

A detection tool searching for “Cointelegraph” would never match “Ć__ointelegraph♤£_.” That’s the whole point. The name is designed to look right to a human scrolling past while dodging automated string matching. The AI reads the visual context, the bio, the avatar, and the suspicious Telegram link together, and flags it.
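To make the string-matching failure concrete, here is a minimal Python sketch of a rule-based check. It is an illustration only, not Bot Hound’s method, and the function names (`naive_match`, `fold`, `normalized_match`) are hypothetical. It shows a plain keyword search missing the spoofed name, a Unicode-normalization rule recovering this particular one, and the same rule failing again as soon as the attacker swaps in a look-alike letter from another alphabet.

```python
import unicodedata

BRAND = "cointelegraph"

def naive_match(display_name: str, brand: str = BRAND) -> bool:
    """Case-insensitive substring search, as a simple keyword filter might do."""
    return brand in display_name.casefold()

def fold(display_name: str) -> str:
    """Hypothetical helper: split accented letters into base letter + accent,
    then keep only characters in a Unicode letter category."""
    decomposed = unicodedata.normalize("NFKD", display_name)
    letters = [ch for ch in decomposed if unicodedata.category(ch).startswith("L")]
    return "".join(letters).casefold()

def normalized_match(display_name: str, brand: str = BRAND) -> bool:
    """Keyword search after folding away accents, symbols, and punctuation."""
    return brand in fold(display_name)

# The display name from the example above.
spoofed = "Ć__ointelegraph♤£_"
print(naive_match(spoofed))        # False: the plain search misses it
print(normalized_match(spoofed))   # True: folding recovers "cointelegraph"

# Assumed variant for illustration: a Cyrillic "С" (U+0421) in place of the
# Latin "C". It looks the same but has no decomposition to a Latin letter.
cyrillic = "\u0421ointelegraph"
print(normalized_match(cyrillic))  # False: the normalization rule is evaded again
```

Each new rule closes one trick and leaves the next one open, which is why reading the name alongside the avatar, bio, and link is more robust than matching the name alone.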

Flirt and Lure Bots

These accounts use suggestive bios and attractive photos to lure people into DM conversations, usually ending in scam links or paid content pitches. They follow thousands of accounts hoping for a follow-back and a curious click.

The bio doesn’t scream “bot” - just emojis and “Old account blocked, hit me here.” But the posts tell the real story: promoting Zangi numbers, posting “Am real guys” (never a good sign), and pushing people to message strangers. 700 following, 79 followers, account created September 2025.

The AI reads the full picture: the messaging app promotion pattern across multiple posts, the suggestive media, the “old account blocked” backstory that lure bots use to explain away being new, and the high following count from mass-follow campaigns. Each piece is circumstantial. Together they’re a clear pattern.

What It (Rightly) Doesn’t Flag

This part matters just as much. A tool that flags everything suspicious isn’t useful. It’s just noisy. Bot Hound is specifically designed to avoid false positives on accounts that might look suspicious on the surface but are clearly real.

Coherent Profiles Read as Human

Bot Hound reads the bio, avatar, username, banner image, and many other features together. When they tell a consistent story, that’s a good indication of a real person.

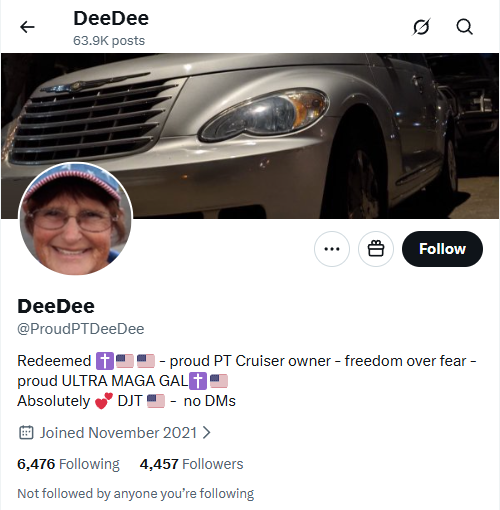

The AI connects the username (ProudPTDeeDee), bio (“proud PT Cruiser owner”), avatar (personal photo), and banner (PT Cruiser) into a coherent picture. Everything aligns. Score: 0.0 - definitively human.

No single field here proves anything on its own. But the AI reads the full profile as a connected narrative and sees a real person with a real hobby. That holistic reading is what separates contextual AI from checklist-based tools.

Joke Names Don’t Trigger It

This account is called “Robot Person” with the parenthetical “Misogynousos Oviedo.” The username is @RobotPerson78. A naive bot detector might flag the name alone. Bot Hound doesn’t.

Despite the name literally containing “Robot,” the AI reads the full profile: 9,886 posts, real engagement, opinionated retweets, and a clearly human posting pattern. Not flagged.

The AI understands that a funny display name is not a bot signal. It looks at the actual behavior, the content, and the engagement patterns. This account posts like a real person because it is one.

Parody Accounts Aren’t Impersonation

This is “Keir Jong Un,” a parody account mocking UK Prime Minister Keir Starmer. It uses a photoshopped image combining Starmer’s face with North Korean imagery. It has 3,291 followers and an active posting history. It doesn’t carry X’s official Parody label.

A parody of a world leader with a photoshopped avatar and no official Parody label. The AI distinguishes this from impersonation based on the satirical bio, the community engagement, and the lack of deceptive intent.

A less sophisticated tool might see “uses a politician’s likeness” and flag it as impersonation. Bot Hound reads the context: the bio is openly satirical (“All Hail Our Supreme Leader”), the account has real followers with real engagement, and the intent is comedy, not deception. It gets classified correctly as a real account.

Why This Matters at Scale

You could probably catch most of these accounts yourself if you clicked through them one at a time. The problem is that you can’t do that for 10,000 followers. Or 100,000.

Bot Hound runs this same analysis across every sampled follower in an account’s list. Impersonation detection, lure pattern recognition, holistic profile reading, and false positive avoidance all run automatically at scale.

The result is a report that tells you what percentage of an account’s audience is real, with per-account evidence for every flagged follower. No manual review required (though you can always drill into individual accounts if you want to verify).
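As a rough sketch of how per-account classifications could roll up into that kind of report, consider the Python below. Everything in it is an assumption for illustration: the `Classification` record, the `"real"`/`"fake"` labels, the `summarize` function, and the sample handles are hypothetical and do not describe Bot Hound’s actual data model or output format.

```python
from dataclasses import dataclass

@dataclass
class Classification:
    handle: str    # hypothetical account handle
    label: str     # "real" or "fake" (assumed labels)
    evidence: str  # short human-readable reason for the label

def summarize(sample: list[Classification]) -> dict:
    """Aggregate a sample of classified followers into a simple report."""
    if not sample:
        raise ValueError("cannot summarize an empty sample")
    flagged = [c for c in sample if c.label != "real"]
    percent_real = 100.0 * (len(sample) - len(flagged)) / len(sample)
    return {
        "sampled": len(sample),
        "percent_real": round(percent_real, 1),
        "flagged": flagged,  # per-account evidence stays attached
    }

# Made-up sample of four followers, one of them flagged.
sample = [
    Classification("example_user_1", "real", "coherent bio, avatar, and banner"),
    Classification("example_user_2", "real", "active, opinionated posting history"),
    Classification("example_user_3", "fake", "zero posts, stolen photo, random digits in username"),
    Classification("example_user_4", "real", "openly satirical parody account"),
]

report = summarize(sample)
print(f"{report['percent_real']}% real across {report['sampled']} sampled followers")
for c in report["flagged"]:
    print(f"  @{c.handle}: {c.evidence}")
```

Keeping the evidence string on each flagged record is what makes the headline percentage checkable: anyone who doubts the number can read the reason behind each flag.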

Run a Bot Hound bot check on any public account and see what it finds.